Emerging Cybersecurity Wildcard: The Non-Linear Expansion of AI-enabled Supply Chain Vulnerabilities

This analysis explores a subtle yet plausible cybersecurity wildcard: AI-driven complexities within supply chain security creating non-linear risk escalations. Beyond known perimeter attacks, this signal highlights a layered systemic fragility that may materially alter capital flows, regulatory paradigms, and industrial structures over the coming two decades.

The rise of artificial intelligence (AI) in cybersecurity is broadly recognized for both defensive and offensive capability shifts. However, an underappreciated development is how AI’s diffusion into software supply chains and third-party ecosystems may trigger exponentially escalating vulnerabilities. This weak signal portends a structural inflection in how organizations, regulators, and insurers must address cybersecurity risk, fundamentally challenging established models built on linear risk assumptions. Capital deployment and governance may need to evolve from isolated threat management toward systemic resilience frameworks.

Signal Identification

This development qualifies as a weak signal with the potential to become an inflection indicator over a 10–20-year horizon. It is weak because, while AI in cybersecurity is heavily discussed, the complex, cascading impacts of AI-enabled vulnerabilities embedded in global digital supply chains are not yet a mainstream policy or investment focus. The plausibility is high due to accelerating AI adoption combined with increasing supply chain interdependencies and third-party reliance documented across sectors including technology, healthcare, finance, and government.

Sectors exposed include software and hardware supply chains, cloud infrastructure providers, critical infrastructure operators, cyber insurance markets, and regulatory bodies overseeing data protection and operational resilience. This signal points to systemic cyber risk amplification beyond conventional incident typologies (such as ransomware), implying a foundational shift in vulnerability landscapes.

What Is Changing

Samsung SDS’s list of top cybersecurity threats places AI-driven attacks alongside ransomware and cloud misconfigurations (Samsung SDS 03/06/2026). At the same time, integrating AI into defensive tooling and incident response improves attack surface visibility while introducing new, complex dependencies (Government Contracts Law 04/06/2026).

Notably, ISC2’s 2026 cybersecurity projections emphasize third-party and supply chain vulnerabilities as critical, reflecting the increasing difficulty of securing interconnected digital ecosystems (WWEX 05/06/2026). Growing dependence on cloud services and third-party modules exposes organizations to opaque, multilayered risks. These risks are exacerbated when AI tools automate or recommend software deployment, potentially introducing AI-embedded vulnerabilities at scale.

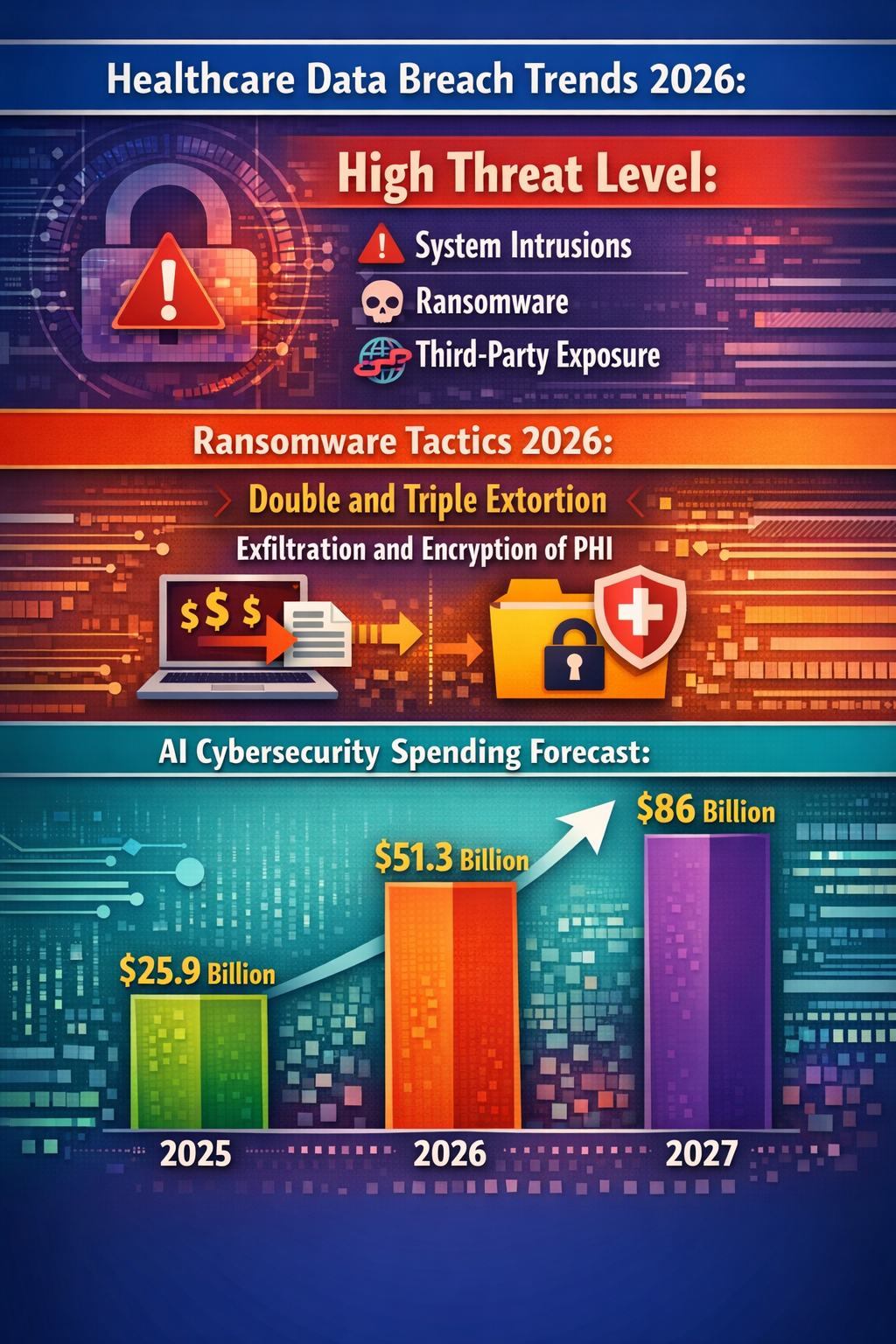

Gartner forecasts AI cybersecurity spending hitting $86 billion by 2027, evidencing accelerated capital shifts toward AI-enabled detection and response, but also raising infrastructure complexity and attack surface footprints (Webiano Digital 02/06/2026). Meanwhile, healthcare breaches highlight persistent third-party exposures that may worsen as AI-generated code composition and supply chain automation increase (Accountable HQ 01/06/2026).

A critical systemic difference is that modern AI tools can discover and exploit software vulnerabilities faster than current human or traditional automated methods (Council on Foreign Relations 31/05/2026). This dual-use characteristic means that AI not only strengthens defenses but also exponentially accelerates supply chain attack capabilities, creating complex, non-linear systemic risk propagation routes that are poorly modeled today.

Disruption Pathway

This wildcard may escalate as AI adoption deepens in software development lifecycles and supply chain orchestration. Organizations may accelerate AI integration to gain competitive advantages in vulnerability detection and automated patching, unintentionally increasing interdependencies and attack vectors. Supply chains running AI tools for code generation, configuration management, and operational decision support become attack multipliers.

As these AI systems autonomously make security decisions, small supply chain compromises (such as manipulated third-party code or flawed AI-generated patches) could cascade rapidly due to feedback loops amplifying effects across diverse interconnected platforms. These feedback loops might overwhelm existing control frameworks, creating systemic resilience failures not addressed by silo-based cybersecurity approaches.
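The cascade described above can be illustrated with a toy dependency graph. The sketch below is only an illustration under stated assumptions: the component names, the graph shape, and the `blast_radius` helper are all hypothetical, and it assumes a compromise always propagates to every downstream consumer, which real ecosystems would not guarantee. It counts how many downstream components a single compromised node can reach.

```python
from collections import deque


def blast_radius(dependents, seed):
    """Return every component reachable downstream of `seed`.

    `dependents` maps a component to the components that consume it.
    Worst-case assumption: a compromise always spreads to every consumer.
    """
    seen = {seed}
    queue = deque([seed])
    while queue:
        node = queue.popleft()
        for consumer in dependents.get(node, ()):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen - {seed}


# Hypothetical ecosystem (all names invented for illustration).
DEPENDENTS = {
    "parser-lib": ["ai-codegen", "build-tool"],
    "ai-codegen": ["vendor-a", "vendor-b", "vendor-c"],
    "build-tool": ["vendor-c", "vendor-d"],
    "vendor-a": ["hospital", "bank"],
    "vendor-b": ["bank", "agency"],
    "vendor-c": ["agency"],
    "vendor-d": [],
}

for seed in ("vendor-d", "vendor-c", "ai-codegen", "parser-lib"):
    print(f"{seed}: {len(blast_radius(DEPENDENTS, seed))} downstream components")
```

In this toy graph, a compromised leaf vendor reaches zero or one downstream component, while the shared AI code-generation tool reaches six and the library beneath it reaches nine. The point is qualitative: exposure depends on position in the graph rather than on the size of the initial compromise.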

Emerging stresses include trust erosion in widely used AI models embedded in supply chain operations; regulatory struggles to define liability across AI-software vendors, integrators, and end-users; and cyber insurance models strained by correlated loss potentials beyond typical actuarial assumptions (SWIF AI Blog 03/06/2026).

Structural adaptations may include redefinition of software provenance and enforceable transparency standards requiring explainable AI use in supply chains, coupled with multi-stakeholder governance regimes overseeing AI lifecycle security. Capital allocation could shift toward integrated risk management platforms combining AI threat intelligence with supply chain resilience analytics.

If left unchecked, this development might invert conventional defensive paradigms centered on perimeter and endpoint hardening, moving the field toward systemic, ecosystem-level control mechanisms and new regulatory consent architectures that balance innovation and security.

Why This Matters

For policymakers, this implies an urgent need to rethink cybersecurity frameworks to incorporate controls over AI-generated supply chain risks and accountability structures spanning diverse vendors and software lifecycle phases. Relying solely on traditional compliance-based cybersecurity regulations may become obsolete as AI-enabled vulnerabilities transcend organizational boundaries.

Investors and capital allocators may need to prioritize funding cybersecurity ventures and infrastructures that embed systemic supply chain resilience, focusing on transparency, AI model governance, and emergent risk quantification rather than isolated intrusion detection. Cyber insurers could recalibrate risk models and underwriting criteria to account for increased tail risk from correlated AI-driven supply chain failures.
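To make the correlated-loss concern concrete, the following sketch compares the dispersion of claim counts in a portfolio of identical policies when breaches are independent versus mildly correlated. It uses the standard variance formula for a sum of equally correlated Bernoulli variables. The portfolio size, breach probability, and correlation value are illustrative assumptions, not figures from the sources cited here, and standard deviation is only a rough proxy for tail exposure.

```python
import math


def claim_count_std(n, p, rho):
    """Standard deviation of the number of breached policies.

    Assumes n identical policies, each breached with probability p,
    and a common pairwise correlation rho between any two breaches:
        Var = n * p * (1 - p) * (1 + (n - 1) * rho)
    """
    variance = n * p * (1 - p) * (1 + (n - 1) * rho)
    return math.sqrt(variance)


# Illustrative assumptions only: 1,000 policies, 2% annual breach probability.
N_POLICIES = 1000
BREACH_PROB = 0.02

independent = claim_count_std(N_POLICIES, BREACH_PROB, rho=0.0)
correlated = claim_count_std(N_POLICIES, BREACH_PROB, rho=0.05)

print(f"independent std: {independent:.2f}")
print(f"correlated std:  {correlated:.2f}")
print(f"ratio:           {correlated / independent:.2f}")
```

Under these assumptions the expected number of claims is unchanged (20 in both cases), but a pairwise correlation of just 0.05 widens the spread of outcomes roughly sevenfold. That is the kind of shift a shared AI toolchain dependency could introduce into a book of business priced on independence.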

Strategically, industry leaders must assess supply chain partner risk holistically, integrating AI risk into vendor scoring and operational continuity planning. Failing to do so risks significant operational disruption and reputational damage, amplified by the scale and speed at which AI-enabled compromises can spread.

Implications

This signal could transform cybersecurity into an ecosystem resilience discipline, embedding AI oversight as a core regulatory and industrial pillar. Capital flows might increasingly favour integrated, AI-audited supply chain security platforms over legacy point solutions.

Conversely, this development may not lead to broad structural upheaval if AI governance matures rapidly or if technical advances in AI explainability and transparency provide manageable risk envelopes. Competing interpretations may view AI-enhanced supply chains as primarily a tactical challenge, not a systemic vulnerability.

Still, this is not a conventional threat vector shift (e.g., from ransomware to phishing); rather, it represents a radical complexity growth in cyber risk architecture. Organizations ignoring the multilayered AI-enabled supply chain dimension risk investing in fragmented defenses that will not scale effectively.

Early Indicators to Monitor

- Emergence of industry-wide AI supply chain security standards and certification bodies

- Surges in venture capital funding for AI transparency and explainability tools in software supply chains

- Regulatory proposals mandating AI lifecycle audits across digital supplier networks

- Sharp rise in cyber insurance claims linked to multi-vendor AI toolchain compromise

- Procurement shifts favoring suppliers demonstrating AI governance and supply chain traceability

Disconfirming Signals

- Rapid breakthroughs in explainable AI that eliminate black-box vulnerabilities

- Stable or declining levels of interconnectivity that reduce third-party reliance

- Effective AI governance frameworks adopted by leading software ecosystems with broad compliance

- Absence of major AI-induced multi-vendor breaches despite expanded AI use

Strategic Questions

- How should regulatory frameworks evolve to assign liability and enforce transparency in AI-driven supply chains?

- What capital deployment strategies effectively hedge against systemic AI-enabled supply chain cyber risks?

Keywords

AI Cybersecurity; Supply Chain Risk; Cyber Insurance; Regulatory Governance; AI Governance; Systemic Risk

Bibliography

- Samsung SDS (the corporate IT security arm) has identified top 5 threats: AI-driven attacks, ransomware, cloud misconfigurations, phishing / account takeover, and data exfiltration. Risk Intelligence Service. Published 03/06/2026.

- Vendors offering AI-enabled defensive tooling, vulnerability detection, and incident response are positioned to capture new work through CISA's expanded cybersecurity tools and services channel and the Treasury-led clearinghouse. Government Contracts Law. Published 04/06/2026.

- ISC2's 2026 cybersecurity predictions identified supply chain security and third-party vulnerabilities as major concerns as companies rely more heavily on interconnected digital systems. WWEX. Published 05/06/2026.

- Gartner forecasts AI cybersecurity spending of $51.3 billion in 2026, nearly double the 2025 estimate of $25.9 billion, and rising to $86 billion in 2027. Webiano Digital. Published 02/06/2026.

- Healthcare data breach trends in 2026 underscore a persistently high threat level driven by system intrusions, ransomware, and third-party exposure. Accountable HQ. Published 01/06/2026.

- Modern AI tools are better than the best cybersecurity professionals at identifying critical vulnerabilities in secure software, potentially making them the world's most powerful hacking tools. Council on Foreign Relations. Published 31/05/2026.

- Cyber insurance and cybersecurity spending are increasingly intertwined as organizations face surging AI-driven threats and supply chain risk (World Economic Forum Global Cybersecurity Outlook 2026). SWIF AI Blog. Published 03/06/2026.